Macro-Economic Modelling and Its Uses

By Bridget Rosewell

An International Macro Symposium this week, hosted by the ESRC and the Oxford Martin School, has brought home to me how little things have changed in some quarters. There is still a belief that with some tweaks the old modelling frameworks can capture the elements that were missing before the crisis, and a deeply held belief that agents that maximise are the key components of the economy.

What became clearer to me in the discussions in the symposium is why I think this is wrong. I’ll start with maximisation, partly because it is such a key part of the economist’s toolkit. Surely, we say, people will learn, and will find out how to describe reality and react to it in ways that can be captured in models. To the extent that they fail, this is already dealt with by thinking in terms of bounded rationality and limited information. And of course agents can differ in their tastes in some way. But this misses the point. All such approaches fail to distinguish between individual and collective behaviour. Once my choices are affected by yours, outcomes can exhibit contagion and cascades, and the order in which decisions are made in time and space will affect any individual outcome. In these circumstances, it is not clear what role maximisation actually plays in defining a macroeconomic outcome. Sure, people may intend to maximise, but most decisions are not made in any way that such a hypothesis would recognise, and each decision in turn informs the constraints on the next one. So the path taken would be hard to characterise in equilibrium terms.

The financial crisis illustrates the implications of having both individual and collective decision making. Although the standard model tends to assume a single representative agent who takes the market price, the existence of a market price in fact implies that there are many individuals, all with slightly different views, who together make the market by buying and selling. Individuals differ. Once all individuals have the same view, there is no market and no trading. In the financial crisis markets shut down because everyone shared the view that they did not know where the bad debts were and that there were more bad debts than they had previously thought. Markets ceased to move prices to reflect an estimated risk and simply ceased to exist at all. This is what caused the liquidity crisis in the banking sector, which governments had to step in to manage.

So one important element which a description of the macro economy must capture is the set of circumstances in which such shifts occur, when individual variation is replaced by collective consensus.

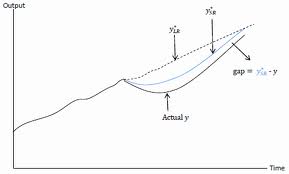

The other important element which models need to capture is risk at the macro level. Individuals may themselves make risk-reward trade-offs, whether rationally or otherwise, but these individual decisions may lead to a variety of outcomes depending on elements of randomness, the order in which decisions are taken, and how agents are connected. Models which include such elements will produce a different result every time they are run, and several such models were presented at the conference, from the Bank of England as well as from J-P Bouchaud, who uses his in trading decisions. Understanding a range of potential outcomes rather than a single point of history gives both a distinct modelling approach with probabilistic behaviours, and a distinctively different take on policy.
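To make the idea of a model that gives a different result on every run concrete, here is a minimal sketch in Python. It is not one of the models presented at the conference. It is a toy sequential-choice model, and the function names (`run_once`, `outcome_range`), the parameter `p_copy` and all default values are assumptions made purely for illustration. Agents decide one after another between two options; with probability `p_copy` an agent imitates a randomly chosen earlier agent, otherwise it flips its own coin. Because each decision feeds into the next, the final share choosing option A varies from run to run, and the thing to look at is the spread of outcomes rather than any single history.

```python
import random
import statistics


def run_once(n_agents=1000, p_copy=0.9, rng=None):
    """Return the share of agents choosing option A in one sequential run."""
    rng = rng or random.Random()
    choices = []  # True means option A, False means option B
    for _ in range(n_agents):
        if choices and rng.random() < p_copy:
            # Imitate a randomly chosen earlier agent (collective behaviour).
            choice = rng.choice(choices)
        else:
            # Decide independently with a fair coin (individual behaviour).
            choice = rng.random() < 0.5
        choices.append(choice)
    return sum(choices) / n_agents


def outcome_range(n_runs=200, seed=0, **kwargs):
    """Run the toy model many times and summarise the spread of outcomes."""
    rng = random.Random(seed)  # fixed seed so the experiment is repeatable
    shares = sorted(run_once(rng=rng, **kwargs) for _ in range(n_runs))
    return {
        "min": shares[0],
        "median": statistics.median(shares),
        "max": shares[-1],
        "stdev": statistics.pstdev(shares),
    }


if __name__ == "__main__":
    print("independent agents:", outcome_range(p_copy=0.0))
    print("imitating agents:  ", outcome_range(p_copy=0.9))
```

With `p_copy=0.0` every agent decides alone and the runs cluster tightly around one half. With heavy imitation the same rules produce a much wider spread of final shares, which is the sense in which the observed past is only one draw from a range of possible outcomes.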

If a given set of behaviours can potentially give rise to a variety of outcomes, of which the past we observe is only one, then any policy choice can also give rise to a range of outcomes. Is that range wide or narrow? Is there a high risk of an undesirable outcome or are all outcomes acceptable? We know that in practice policies do not always deliver what was hoped. Interest rate cuts sometimes give the desired impact on exchange rates but quite often they do not. Even the sign of the change can be uncertain. It is an abdication of responsibility to assume that the unexpected is simply the result of random shocks from outside the model. It would be much more satisfactory to start to build in some of the randomness and, better still, to understand how it arises. Many of the models developed in the econophysics community have these properties. They need to be taken seriously.

Finally, it seems that there remains confusion about the difference between explanation and prediction. David Hendry presented his ideas about data-driven modelling, which starts by identifying where shifts in the data occur and how early signals of such shifts can be captured with high-frequency information. No behavioural hypotheses are needed here, so this is not about explanation but about the ability to predict. All the information about a series of interest is of course included in its history, and its prediction can be generated from trends and patterns within it, sometimes very successfully. Understanding how it is correlated with other data may help to understand the data but will only help in prediction if it is possible to predict the correlates. Using high-frequency data, survey data, or market information may indeed help to do this. It is not explanation, but neither does it have to be.
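As a small illustration of prediction without explanation, the sketch below forecasts a series purely from its own history. It is not Hendry's method, which concerns detecting shifts in the data. It is a plain first-order autoregression fitted by least squares, chosen here only because it is the simplest case of "trends and patterns within" a series. The function names `fit_ar1` and `forecast` and the example numbers are assumptions made for illustration.

```python
def fit_ar1(series):
    """Least-squares fit of y[t] = a + b * y[t-1]; returns (a, b)."""
    x = series[:-1]  # lagged values
    y = series[1:]   # current values
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b


def forecast(series, steps=1):
    """Project the fitted relation forward from the last observed value."""
    a, b = fit_ar1(series)
    value = series[-1]
    path = []
    for _ in range(steps):
        value = a + b * value
        path.append(value)
    return path


if __name__ == "__main__":
    history = [0.0, 1.0, 1.5, 1.75, 1.875]  # made-up numbers
    print(forecast(history, steps=3))
```

Nothing in the code says why the series behaves as it does. It carries no behavioural hypothesis at all, yet it can still produce a usable forecast so long as the pattern in the history persists.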

However, I don’t think that this is really grasped by the macro modelling community who want explanation, prediction and policy modelling all in the same box. If that box continues to be populated only by equilibrium models, then we will not have learnt anything. I look forward, though, to the next conference, where I hope dynamic models will have ranges of outcomes, and it will be possible to explore the circumstances in which markets move from a normal variety of outcomes to ones where they cease to exist. Then we will have moved on.

You say:

“However, I don’t think that this is really grasped by the macro modelling community who want explanation, prediction and policy modelling all in the same box.”

I think this is a very important point and, from my experience, is also something that one even finds within the complexity modelling community. A surprising number of papers describing complexity-type models are unclear as to which of these three applications of a model – or possibly others – are being pursued.

Conversely, you have constant debates where people state that the “role of any model” should be to privilege one aspect. For example, I have heard some say “the purpose of a model is prediction only” and others say “it’s primarily about explanation”.

As you say, there are many ways to use a model and it depends on the aims of the work. I think we, in the complexity community, could benefit from being clearer about this in our work. Following this line of thinking, a good discussion of the use of models (when they are not predictive) is the well-known paper by Josh Epstein:

“Why Model?”

http://jasss.soc.surrey.ac.uk/11/4/12.html